Thank you to everyone who attended my webinar last week! In that session, I went through all the steps to install SQL Server to be able to use Hadoop, but I thought it might also be a good idea to include them here so you don’t have to watch the video.

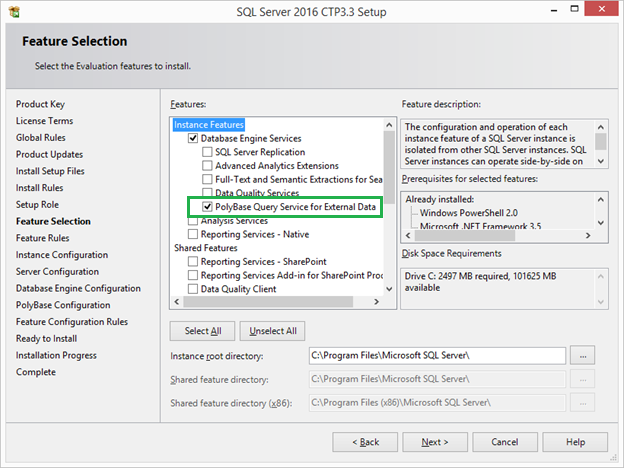

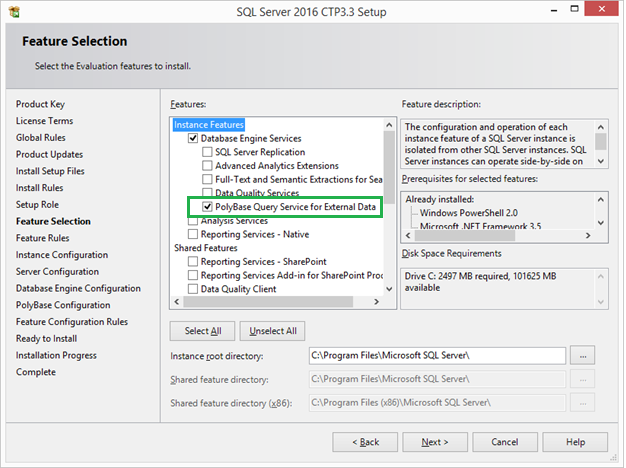

Polybase Install Feature

When installing SQL Server in the Feature Selection List shown below, PolyBase Query Service for External Data must be selected.

To check to see if polybase has been successfully installed, go to Control Panel->Administrative Tools->Services. There are two services added for polybase, SQL Server PolyBase Data Movement and SQL Server Polybase Engine, as well as a bunch of other new ones for SQL Server 2016. The polybase services and SQL Server will need to be restarted in a later step. When starting SQL Server Management Studio for SQL Server 2016, it is hard to spot the differences between it and previous versions of SQL Server as even the icon looks exactly the same. Once you create a database for SQL Server, you will notice a slight difference under the tables folder. When polybase is installed there are two new folders, External Tables and External Resources. Data which is accessed via polybase will be stored under the External Files folder and the External Resources will contain the references to the external resources, the HDFS cluster where the data is stored, as well as the file formats of the underlying data.

To check to see if polybase has been successfully installed, go to Control Panel->Administrative Tools->Services. There are two services added for polybase, SQL Server PolyBase Data Movement and SQL Server Polybase Engine, as well as a bunch of other new ones for SQL Server 2016. The polybase services and SQL Server will need to be restarted in a later step. When starting SQL Server Management Studio for SQL Server 2016, it is hard to spot the differences between it and previous versions of SQL Server as even the icon looks exactly the same. Once you create a database for SQL Server, you will notice a slight difference under the tables folder. When polybase is installed there are two new folders, External Tables and External Resources. Data which is accessed via polybase will be stored under the External Files folder and the External Resources will contain the references to the external resources, the HDFS cluster where the data is stored, as well as the file formats of the underlying data.

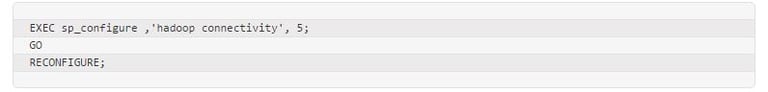

Configuring Polybase on SQL Server

SQL Server needs to be configured to use polybase by using the sp_configure command:

The number 5 indicates the kind of Hadoop connectivity desired. If you were using HDInsight’s Azure Blob storage or Hortonworks on Windows, the number listed would be 4. The number 5 indicates connectivity to Hortonworks Data Platform on Linux. Go ahead and exit out of SQL Server as you will be restarting it soon.

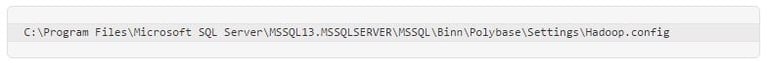

Configuration File Modification

Open up your favorite text editor to modify the Hadoop.config file. The default location is:

Keep in mind, if you installed SQL Server with a named instance the path will include the name of that instance. The config file contains a default password for Hadoop. The password, which is initially set to pdw_user is probably a holdover from pre-2016 days when polybase was only available on Microsoft’s Big Data Appliance, the Analytics Platform System [APS] which was previously called Parallel Data Warehouse [PDW]. Hortonworks’ default password is hue, so you will want to modify the file so that the HadoopUserName has the right password, hue. I circled it below in a clip of the Hortonworks.config file.

Once the changes to the Hadoop.config file are saved, to get polybase to work, the last thing which must be done is to restart the two new polybase services and SQL Server 2016.

Setting up the External Data Source and External Data Files

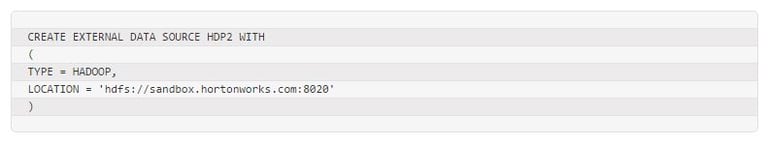

At this point, we can now tell SQL Server where the Hadoop files are loaded. Open SQL Server Management Studio [SSMS] and create a new query to create the new an external data source, which is going to appear in the new folder, External Data Source.

After running this command and refreshing, the source HDP2 will appear in the folder External Data Source ->Data Sources.

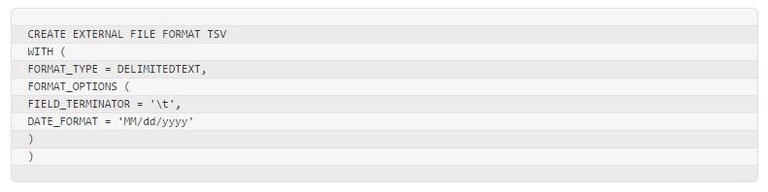

SQL Server needs to know what the underlying data file formats of the data stored in Hadoop. Assuming the data is stored in a tab delimited format, this command will tell SQL Server how to read the tab delimited data and the format of the dates. This will allow polybase to read a sample file which comes with Hortonworks, the file SAMPLE_07:

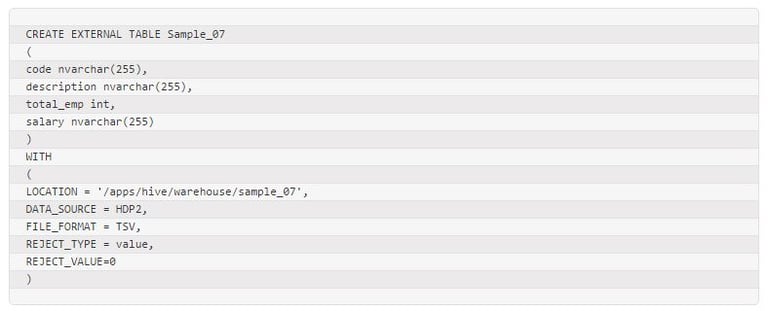

SQL Server needs a schema to read the data, as it doesn’t know where the fields are without it. The following code creates a table which will appear under the External Tables folder in SSMS as well as load the data by telling it where the data lives and to use the file format which we just added.

After this step is complete, you can use the new table to join to data on SQL Server from inside a HDFS cluster.

I hope this helps you get started with using polybase with SQL Server 2016. Let me know what you think by posting a comment.

Sign-up now and get instant access

ABOUT THE AUTHOR

Free Trial

On-demand learning

Most Recent

private training

-1.png)

Leave a comment